Architecture determines chatbot reliability, scalability, and business value

Architecture defines how your AI systems behave under pressure. It’s the foundation of reliability, speed, and long-term value. When done right, architecture makes a chatbot dependable, secure, and adaptable. When done poorly, it creates fragility: bots that crash under load, leak data, or require full rebuilds when business processes evolve.

Chatbots must connect seamlessly to a company’s digital ecosystem: its data sources, identity systems, and workflows. This isn’t a cosmetic choice; it shapes how responsive the system is and how fast it scales. A well-built architecture separates components such as retrieval logic, orchestration, and inference, preventing issues in one area from spreading across the system. It also preserves security by isolating access control and data handling.

Executives evaluating long-term AI investments should focus less on model branding and more on structure. The architecture outlasts any current large language model (LLM). A modular setup allows businesses to switch out underlying models without losing integrations or data governance. This flexibility protects the investment and ensures the chatbot evolves with both customer needs and advances in AI technology.
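As a rough illustration of what "switching out the underlying model" can look like in code, the sketch below hides each model behind one small interface. The names `ChatModel`, `EchoModel`, `UppercaseModel`, and `Chatbot` are hypothetical stand-ins, not any vendor's API; a real backend would wrap an actual SDK call.

```python
from typing import Protocol


class ChatModel(Protocol):
    """Hypothetical interface that every model backend must satisfy."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend; a real one would call a vendor SDK here."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseModel:
    """A second stand-in backend with different behavior."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


class Chatbot:
    """Depends only on the ChatModel interface, never on a concrete vendor."""

    def __init__(self, model: ChatModel) -> None:
        self.model = model

    def reply(self, user_message: str) -> str:
        return self.model.complete(user_message)
```

Because `Chatbot` only knows the interface, replacing `EchoModel` with `UppercaseModel` is a one-line change, and integrations and governance code around it stay untouched.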

Strong architecture also means lower operational cost. It cuts down on maintenance, simplifies scaling, and reduces downtime. When designed to scale independently across layers, the system can handle heavy traffic or new use cases without performance degradation. Ultimately, reliable architecture turns AI from an experimental project into a sustainable business asset.

Integration depth defines operational value

Integration is where chatbots stop being toys and start driving business outcomes. A chatbot fully connected to your existing IT, CRM, and enterprise systems doesn’t just talk; it acts. It can check order status, update records, create support tickets, or pull real-time data from a knowledge base. When your chatbot executes meaningful business actions, it stops being a front-end interface and becomes a genuine operational tool.

Deep integration builds trust and speed in operations. When every exchange ties into accurate, up-to-date business data, the chatbot delivers dependable results. APIs make this possible: they are the bridge between AI systems and core company databases. Centralized authentication ensures that only authorized users can access specific functions, keeping data secure while enabling automation.
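One way to picture "only authorized users can access specific functions" is a single permission check that every chatbot action passes through. The sketch below is an assumption-heavy illustration: the action names, roles, and the `ACTION_ROLES` table are invented for the example, and a production system would take roles from its identity provider.

```python
from dataclasses import dataclass
from typing import FrozenSet

# Hypothetical mapping from chatbot action to the roles allowed to run it.
ACTION_ROLES = {
    "check_order_status": {"customer", "agent"},
    "update_record": {"agent"},
    "issue_refund": {"supervisor"},
}


@dataclass(frozen=True)
class User:
    user_id: str
    roles: FrozenSet[str]


def is_authorized(user: User, action: str) -> bool:
    """Allow the action only if the user holds at least one permitted role."""
    allowed = ACTION_ROLES.get(action, set())  # unknown actions are denied
    return bool(user.roles & allowed)


def run_action(user: User, action: str) -> str:
    """Single entry point: every action is checked before it executes."""
    if not is_authorized(user, action):
        return f"denied: {action}"
    return f"ok: {action}"
```

Keeping the check in one place means new integrations inherit the same access rules instead of reimplementing them.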

For leaders, integration should be viewed as a growth multiplier, not a line item. Businesses that commit to deep system connectivity unlock measurable gains in efficiency and decision speed. These integrations don’t just eliminate manual steps; they create new ways of interacting with systems, from reporting to transaction execution.

The goal is to create a chatbot capable of handling dynamic workflows across departments. A bot that connects with ITSM can automate incident creation. Integrated with CRM, it personalizes interactions by referencing customer history. Connected to e-commerce platforms, it processes transactions or updates carts in real-time.

Integration depth determines whether your chatbot is a simple Q&A interface or a core part of your digital operations. The companies getting ahead are the ones treating integration not as a feature, but as the strategic backbone of intelligent automation.


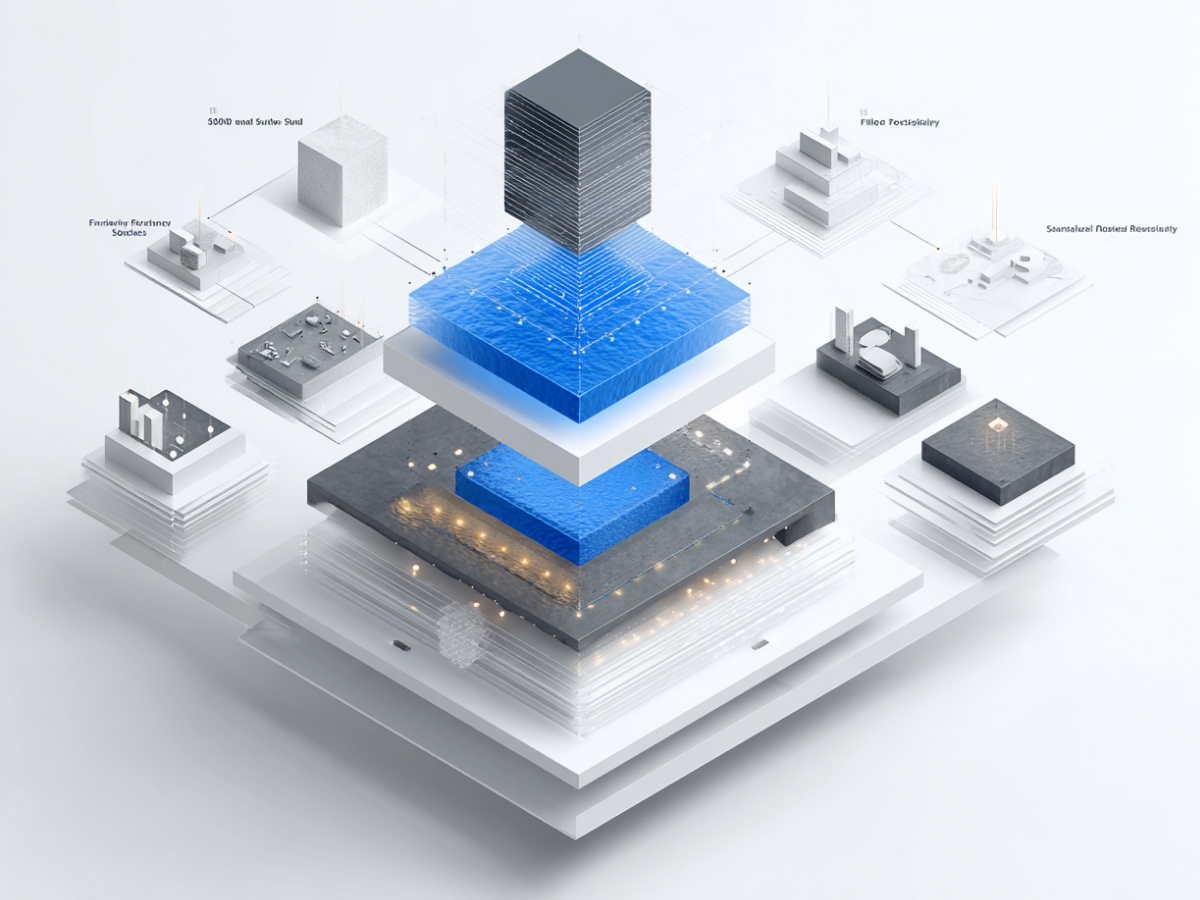

Enterprise chatbots require layered, modular architecture

A layered, modular structure is what keeps AI systems reliable as they expand. When different parts of the chatbot have defined roles (interface, orchestration, AI, data, and integration), teams can upgrade or replace one layer without disrupting the entire system. This separation makes scaling efficient and lowers the risk of downtime during maintenance or feature updates.

The Interface Layer manages all user interaction channels. Whether conversations happen on a website, a mobile app, or a messaging platform, this layer ensures a consistent experience. It handles input, displays responses, and manages state to keep conversations flowing naturally.

The Orchestration Layer coordinates systems and tasks. It decides what needs to happen when a user asks a question or makes a request. By routing workflows across specialized modules, it ensures logic stays organized while minimizing errors from miscommunication between components.
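A minimal sketch of that routing idea follows. It assumes a keyword-based `classify_intent` purely as a placeholder for a real intent classifier, and the handler names are hypothetical.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


def handle_order_status(message: str) -> str:
    return "Looking up your order."


def handle_create_ticket(message: str) -> str:
    return "Creating a support ticket."


def handle_fallback(message: str) -> str:
    return "Passing this to a human agent."


# Registry of specialized modules, keyed by intent (hypothetical names).
HANDLERS: Dict[str, Handler] = {
    "order_status": handle_order_status,
    "create_ticket": handle_create_ticket,
}


def classify_intent(message: str) -> str:
    """Keyword stand-in for a real intent classifier."""
    text = message.lower()
    if "order" in text:
        return "order_status"
    if "broken" in text or "problem" in text:
        return "create_ticket"
    return "unknown"


def orchestrate(message: str) -> str:
    """Route the message to the matching handler, or fall back safely."""
    handler = HANDLERS.get(classify_intent(message), handle_fallback)
    return handler(message)
```

Adding a new workflow means registering one more handler; the routing logic itself does not change.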

The AI Layer handles the intelligence, understanding user intent and generating responses. Cost-aware routing directs simple requests to smaller models while reserving advanced models for complex reasoning. Techniques like self-checking help address hallucinations, keeping responses accurate.
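Cost-aware routing can be sketched with a simple heuristic. Everything here is an assumption for illustration: the model names `small-model` and `large-model` are placeholders, and the word-count threshold and keyword list would need tuning against real traffic.

```python
# Phrases that suggest a request needs multi-step reasoning (illustrative only).
REASONING_HINTS = ("why", "compare", "explain", "step by step")


def pick_model(message: str, max_simple_words: int = 20) -> str:
    """Send short, simple requests to the cheaper model tier."""
    text = message.lower()
    long_request = len(text.split()) > max_simple_words
    needs_reasoning = any(hint in text for hint in REASONING_HINTS)
    if long_request or needs_reasoning:
        return "large-model"  # placeholder name for the advanced tier
    return "small-model"  # placeholder name for the low-cost tier
```

In practice the decision might come from a lightweight classifier rather than keywords, but the shape is the same: decide the tier before spending on inference.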

The Data Layer ensures information is current and precise. It integrates internal documents, CRM data, and knowledge bases through retrieval mechanisms such as vector search. Retrieval-Augmented Generation (RAG) combines real-time data with AI reasoning to create context-grounded responses.
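The retrieve-then-generate flow can be sketched in a few lines. This toy version uses word-count vectors in place of a real embedding model and an in-memory dictionary in place of a vector database; the sample documents are made up for the example.

```python
import math
from collections import Counter
from typing import List

# Made-up sample knowledge base; a real one would live in a vector store.
DOCUMENTS = {
    "returns": "Items can be returned within 30 days of delivery.",
    "shipping": "Standard shipping takes three to five business days.",
    "warranty": "Hardware carries a two year limited warranty.",
}


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a real model)."""
    words = (w.strip(".,?!").lower() for w in text.split())
    return Counter(w for w in words if w)


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, k: int = 1) -> List[str]:
    """Return the ids of the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(
        DOCUMENTS, key=lambda d: cosine(q, embed(DOCUMENTS[d])), reverse=True
    )
    return ranked[:k]


def build_prompt(query: str) -> str:
    """Ground the model by placing retrieved text ahead of the question."""
    context = "\n".join(DOCUMENTS[d] for d in retrieve(query))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

The prompt returned by `build_prompt` is what would be sent to the language model, which is how answers stay tied to current company data.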

Finally, the Integration Layer connects external business applications through APIs and workflows. This turns conversational systems into operational engines capable of real action.

For executives, adopting this structure means gaining control and scalability without chaos. Each layer can be optimized, monitored, and improved independently. This architecture ensures long-term adaptability, making it possible to keep pace with organizational growth and shifting customer demands.

Four major architectural patterns define chatbot design choices

The way a chatbot’s architecture is designed determines how fast it can deploy, how deeply it integrates, and how well it adapts. Four dominant patterns guide these strategies: SaaS-based, Retrieval-Augmented Generation (RAG), Fully Custom, and Modular/Adaptable. Each addresses different needs and levels of control.

SaaS Architecture focuses on speed. Platform-based chatbots let businesses deploy systems quickly, often with built-in RAG features, multi-tenant design, and pre-configured integrations. These are ideal for straightforward use cases such as customer service or lead qualification. The limitation is clear: restricted customization, limited backend access, and potential compliance risks when sensitive data is involved.

RAG-Based Architecture bridges knowledge and flexibility. It grounds chatbot answers in real organizational data rather than relying only on what the model learned during training. It retrieves relevant documents using vector databases, then generates responses with that context. This structure delivers precision and transparency through cited sources. Managed tools like Amazon Bedrock Knowledge Bases already provide frameworks for this design, balancing control and simplicity.

Fully Custom Architecture gives enterprises complete autonomy. It suits organizations in regulated industries or those handling proprietary data requiring tight governance. These systems are built from specialized components such as embedding models, vector databases like Pinecone, and orchestration frameworks like LangChain. The trade-off is higher engineering and maintenance investment.

Modular or Adaptable Architecture is emerging as the best long-term design. Its components operate independently but communicate through well-defined protocols. This allows horizontal scaling, easy maintenance, and fast integration of new models or features without system-wide risk.

For executives, architectural choice is strategic, not technical. SaaS systems accelerate deployment for basic use. RAG-based systems offer balance between speed and data control. Custom frameworks maximize independence at higher cost. Modular architectures combine flexibility with resilience, providing freedom to adapt without rebuilding from zero.

Choosing the right pattern means aligning technology with business goals, delivering performance today and adaptability tomorrow.

Architecture decisions should reflect core business factors

Strong architecture begins with clarity on business priorities. Every design choice should align with how the organization operates and grows. Four critical factors shape these decisions: use case complexity, data sensitivity, integration depth, and performance requirements.

Use case complexity defines how advanced the architecture must be. A chatbot built for FAQs and simple inquiries doesn’t need the same processing framework as one supporting customer service, troubleshooting, or product recommendations. Multi-use bots require orchestration that separates logic for each activity so responses remain precise. Parameters like temperature settings and token limits must adjust with intent, keeping factual answers crisp and creative tasks dynamic. Modular designs handle this variation most effectively, because each activity can be tuned without producing inconsistent performance elsewhere.
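Adjusting generation parameters by intent can be as simple as a lookup table. The intents and numbers below are illustrative assumptions, not recommended settings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GenerationParams:
    temperature: float  # lower values give more deterministic output
    max_tokens: int  # upper bound on response length


# Illustrative values only; real settings depend on the model and use case.
PARAMS_BY_INTENT = {
    "faq": GenerationParams(temperature=0.1, max_tokens=150),
    "troubleshooting": GenerationParams(temperature=0.3, max_tokens=400),
    "product_recommendation": GenerationParams(temperature=0.8, max_tokens=300),
}

DEFAULT_PARAMS = GenerationParams(temperature=0.3, max_tokens=256)


def params_for(intent: str) -> GenerationParams:
    """Pick generation settings for an intent, with a conservative default."""
    return PARAMS_BY_INTENT.get(intent, DEFAULT_PARAMS)
```

Factual intents get a low temperature and a tight token budget, while recommendation-style intents get more room to vary.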

Data sensitivity determines how information is stored, accessed, and protected. Businesses handling financial records, healthcare data, or personally identifiable information must comply with privacy regulations such as GDPR and CCPA. Architectures that support encryption, role-based access, and data minimization set the foundation for trust and compliance. SaaS solutions may fall short here because they often process data in shared environments under vendor-defined retention policies. In such cases, custom or hybrid systems offer clearer data residency and control.

Integration requirements influence whether the chatbot is passive or powerful. Shallow integrations enable only information retrieval. Deep, bidirectional integrations allow real-time updates, new ticket creation, workflow automation, and transactional execution. These capabilities turn conversational AI into a platform that enhances both user satisfaction and operational performance.

Performance and scaling needs must be defined early. Traffic surges, concurrent sessions, and evolving workloads demand architectures that scale automatically and maintain response speed. Cloud-native deployments using containers and load balancing ensure consistent user experience during peak activity. Ignoring scale leads to unstable performance and reputational risk once adoption grows.

For C-suite leaders, these factors form a checklist for decision-making. Every architectural investment should first pass through these four lenses. When architecture maps directly to business reality (complexity, compliance, connectivity, and capacity), AI systems transition from pilots to essential enterprise tools.

Common architectural mistakes undermine chatbot success

Most chatbot failures can be traced to design assumptions made early in planning. The main reasons include unclear objectives, shallow integration, and ignoring scalability from the start. These issues weaken performance and increase operational costs.

The first mistake is launching without defined use cases. Many teams begin by selecting models before identifying what business problems the chatbot should solve. This results in inefficient systems with overlapping functions and no clear outcomes. Documenting three to five specific intents before development keeps the bot focused and measurable. Common high-return cases include customer service, marketing automation, and HR task management, all areas already showing proven ROI and cost savings.

The second mistake is neglecting integration planning. Chatbots disconnected from key systems such as CRM or order management can only provide surface-level assistance. They fail to resolve issues that require access to underlying data. Proper integration planning includes authentication, data transformation, and API mapping at the architectural stage, not after deployment. Projects that ignore this often incur hidden infrastructure and maintenance costs later, making them operationally inefficient.

The third mistake is overlooking scalability. Static systems can serve early users but collapse during peak traffic or rapid growth. Response delays, timeouts, and dropped sessions damage user trust. Continuous load testing and automated monitoring of metrics such as response time, retention, and completion rates reveal weaknesses before they affect customers. Scale should be designed in from the beginning, using microservices and performance metrics to ensure growth readiness.

These failures are preventable. Building with clear objectives, real integrations, and scalable frameworks leads to measurable results. According to industry research, chatbots implemented with solid architecture have already replaced 36% of U.S. customer service tasks, demonstrating their potential when executed with precision and foresight. For executives, that’s the difference between a system that enhances operations and one that becomes technical debt.

Flexible, adaptive systems are replacing rigid chatbots

The chatbot space is moving away from fixed, rules-based systems toward adaptive designs that interpret intent in real time. Static chatbots rely on predetermined scripts. Once users deviate, they fail to deliver meaningful answers. Adaptive chatbots analyze language, intent, and context as they interact, producing responses that are relevant even under new conditions.

This shift is driven by business needs for systems that evolve without constant manual updates. Adaptive chatbots learn from ongoing conversations, improving accuracy and tone alignment with each exchange. They handle wider query ranges, accommodate regional language differences, and maintain context over longer interactions.

For leaders, investing in adaptive architectures is a way to future-proof operations. These systems use contextual reasoning rather than simple keyword matching, producing higher response accuracy and stronger customer experience metrics. The result is not only smoother automation but also a system that keeps pace with dynamic business communication patterns.

Flexibility is as much about resilience as it is about capability. When customer expectations or workflows change, adaptive systems adjust without forcing complete reengineering. They maintain compatibility with new data sources, tools, and service layers. This ability to self-tune ensures organizations continue to meet user needs as their operational landscapes evolve.

Modular design delivers measurable reliability and maintenance benefits

Modular chatbot architecture separates system functions into self-contained units, creating a foundation for control, performance, and maintainability. Each module operates independently, meaning developers can make targeted updates, troubleshoot specific issues, or scale certain areas without affecting the rest of the system. This structure enhances both reliability and long-term development efficiency.

Decoupling components also reduces operational risk. A failure in one module, such as language processing, does not cascade into other areas like data retrieval or payment execution. This containment turns routine maintenance and updates into predictable processes, keeping live environments stable and responsive.
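Failure containment is often implemented with a circuit breaker: after repeated errors, calls to the failing module are skipped and a fallback is returned instead. The class below is a simplified sketch under that assumption (no timeouts or automatic recovery), with hypothetical names.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Stops calling a failing module after too many consecutive errors."""

    def __init__(self, max_failures: int = 3) -> None:
        self.max_failures = max_failures
        self.failures = 0

    @property
    def is_open(self) -> bool:
        return self.failures >= self.max_failures

    def call(self, func: Callable[[], T], fallback: T) -> T:
        if self.is_open:
            return fallback  # skip the failing module entirely
        try:
            result = func()
        except Exception:
            self.failures += 1  # contain the error instead of propagating it
            return fallback
        self.failures = 0  # a success resets the failure count
        return result
```

Wrapping a language-processing call this way lets data retrieval or payment modules keep working while the failing module returns a safe fallback.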

For executives, modularity translates directly into measurable business efficiency. Teams can accelerate release cycles, respond faster to system issues, and integrate new updates with minimal disruption. It keeps budgets predictable by reducing unexpected maintenance costs and downtime losses.

At scale, modular design enhances performance through horizontal scaling and efficient caching. Businesses can dynamically add processing capacity during traffic peaks or when introducing new capabilities. This ensures the chatbot remains responsive even as customer interaction volume grows.

Strategic architecture selection ensures long-term value

Selecting the right chatbot architecture is a strategic decision with long-term business consequences. The architecture determines how well the system supports ongoing innovation, compliance demands, and scaling goals. A well-chosen architecture adapts to evolving customer needs, integrates seamlessly with enterprise systems, and maintains consistent performance under growth pressures.

Executives should view this choice as more than a technical specification. It defines how the organization will deliver digital experiences for years to come. The key is alignment: choosing an architecture that reflects the company’s operational scale, regulatory environment, and strategic objectives. Businesses focused on rapid expansion benefit from modular or hybrid architectures that scale horizontally and minimize downtime. Highly regulated sectors, on the other hand, should prioritize data control and security through custom or hybrid systems that enforce compliance.

The decision also affects cost efficiency and agility. Systems designed with separation of concerns between data, retrieval logic, and the language model reduce vendor dependency and simplify upgrades. This kind of flexibility lowers future transition costs as technology advances and new platforms emerge. Integration with existing infrastructure remains smooth, and teams can iterate quickly without reengineering critical components.

A stable, adaptable architecture also supports continuous performance optimization. Clear monitoring systems track success rates, system load, and response times, providing executives and technology leaders with transparent performance metrics. With this setup, improvements become data-driven rather than reactive, ensuring predictability in both performance and cost.
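The metrics mentioned above can be collected with very little code. This is a minimal in-memory sketch with hypothetical names; a real deployment would export these values to a monitoring system rather than hold them in a list.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List


@dataclass
class ChatMetrics:
    """Tracks success rate and response time for chatbot requests."""

    latencies_ms: List[float] = field(default_factory=list)
    successes: int = 0
    total: int = 0

    def record(self, latency_ms: float, success: bool) -> None:
        self.latencies_ms.append(latency_ms)
        self.total += 1
        if success:
            self.successes += 1

    def success_rate(self) -> float:
        return self.successes / self.total if self.total else 0.0

    def avg_latency_ms(self) -> float:
        return mean(self.latencies_ms) if self.latencies_ms else 0.0
```

Recording one entry per request is enough to chart success rate and average response time over time, which is what turns tuning into a data-driven exercise.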

For C-suite leaders, the message is straightforward. The architecture determines how far the chatbot can go before it needs replacement. Strategic selection ensures that investment compounds in value, supporting business growth while sustaining competitive agility.

The bottom line

The quality of your chatbot’s architecture determines how far the system can take your business. This isn’t about chasing the latest model; it’s about designing a foundation strong enough to evolve with your operations and market conditions. Architecture defines how your AI scales, secures data, and integrates real business logic, not just how it replies to questions.

For executives, the lesson is direct. Treat architecture as a strategic decision that shapes long-term competitiveness. A modular, integrated, and adaptive framework keeps your systems reliable while allowing innovation at speed. It gives your business control, agility, and measurable return on technology investment.

AI chatbots built with strong architectural discipline don’t just automate; they extend business capability. They move from conversation to action, connecting data, workflows, and decision-making. The organizations that approach this with clarity and foresight will build chatbot ecosystems that adapt, scale, and deliver value far beyond today’s expectations.
